Hedgehog System Evolution!

A Blog from Mike Irving, Software Developer

By Mike Irving - Published: 23/1/2023

It has been around 6 weeks since I published my .NET, IoT and Hedgehogs! blog post, to coincide with it being featured on the .NET Advent Calendar - Day 5.

The Macclesfield Hedgehogs website also launched at around the same time.

It was really well received, and I was pleased to receive some really positive feedback about it.

A lot has changed since then, at least on a technical level.

Hibernation

Sadly, there has only been one hedgehog spotted since, and only passing by in the garden.

Whilst the hedgehogs have been hibernating, lots of mice have been visiting instead!

They even had some Christmas Decorations to enjoy through the New Year.

Thumbnails

When building the website, and then when testing it for the first time with a large amount of real data in the video feed, it became apparent that there was a performance problem.

I wasn't supplying a thumbnail graphic for video clips, so the browser was having to download the beginning of a video to then create these itself.

This isn't a problem on websites where there are only one or two videos, but with lots of videos it adds up. There is a performance hit to the browser, and an unavoidable big spike in network traffic.

Furthermore, when testing on mobile this seemed worse. The browser was sometimes cutting off the request for several of the videos, and AWS S3 appeared to respond with its own access denied notice.

The initial 'quick fix' was to load the videos up to 5 at a time, with a [+] button to show more. This alleviated the problem, as by the time the user had hit the button, the previous set had probably all been dealt with. But this wasn't a complete solution. For the most part it didn't address the processing and network traffic spikes; it just slightly improved the rendering performance and user experience.

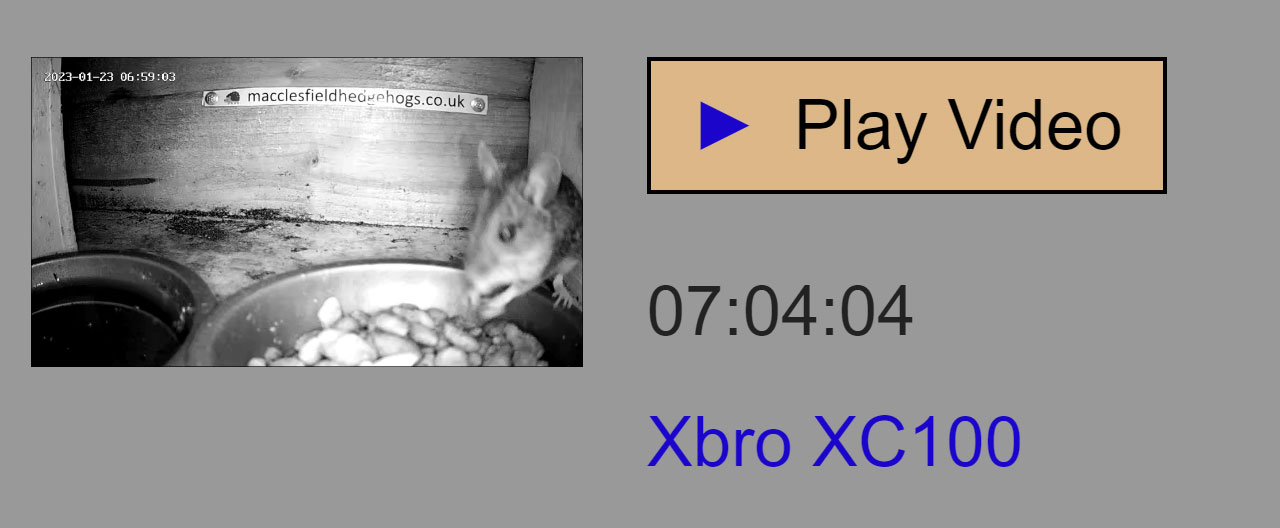

The HTML video tag supports thumbnails via its poster attribute, which takes the URL of an image file.

Working out the how, when and where of creating such images would be my next major technical challenge.

Looking at NuGet, it seemed most thumbnail creation packages were using FFmpeg to create thumbnail images from video files.

I had a look at a few of these, with examples, and decided upon Xabe.FFmpeg.

I decided this would be done as another AWS Lambda Function. I'd examine the files in the S3 Bucket, then work out which videos (MP4 files) didn't have a corresponding image (JPG file) and create one for each.
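As a rough sketch of that selection step (not the actual Lambda code), the check can be written against a plain list of object keys. The helper name and the sample keys below are made up for illustration, and listing the bucket itself is left out.

```csharp
using System;
using System.Collections.Generic;
using System.IO;
using System.Linq;

// Hypothetical helper: given every object key in the bucket, return the
// MP4 keys that have no JPG with the same base name alongside them.
static List<string> VideosMissingThumbnails(IEnumerable<string> keys)
{
    var all = keys.ToList();

    // Collect the thumbnails that already exist, with the extension removed.
    var thumbnails = all
        .Where(k => k.EndsWith(".jpg", StringComparison.OrdinalIgnoreCase))
        .Select(k => Path.ChangeExtension(k, null))
        .ToHashSet();

    // Keep only the videos whose base name is not in that set.
    return all
        .Where(k => k.EndsWith(".mp4", StringComparison.OrdinalIgnoreCase))
        .Where(k => !thumbnails.Contains(Path.ChangeExtension(k, null)))
        .ToList();
}

// Made-up keys for illustration only.
var sampleKeys = new[] { "2023/01/clip-a.mp4", "2023/01/clip-a.jpg", "2023/01/clip-b.mp4" };
foreach (var key in VideosMissingThumbnails(sampleKeys))
    Console.WriteLine(key); // prints 2023/01/clip-b.mp4
```

Matching on the base name keeps the rule simple: a video counts as processed as soon as a JPG with the same name exists next to it.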

This would involve downloading each video to a temporary folder and creating a thumbnail based on its first frame.
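To give an idea of what the first frame step involves, here is a minimal sketch that builds an ffmpeg command line directly. This is a stand-in for illustration rather than the Xabe.FFmpeg wrapper mentioned above; the helper name and file name are hypothetical, and it assumes an ffmpeg executable is available on the PATH.

```csharp
using System;
using System.Diagnostics;
using System.IO;

// Hypothetical helper: build an ffmpeg invocation that writes the first
// frame of a video to a JPG with the same base name.
static ProcessStartInfo BuildThumbnailCommand(string videoPath, string ffmpegPath = "ffmpeg")
{
    var startInfo = new ProcessStartInfo(ffmpegPath) { UseShellExecute = false };

    // ArgumentList handles quoting, so paths containing spaces are safe.
    var arguments = new[]
    {
        "-y",              // overwrite an existing thumbnail
        "-i", videoPath,   // input video
        "-frames:v", "1",  // stop after the first video frame
        Path.ChangeExtension(videoPath, ".jpg"),
    };
    foreach (var argument in arguments)
        startInfo.ArgumentList.Add(argument);

    return startInfo;
}

// Path.GetTempPath() normally resolves to /tmp/ on Linux.
var sampleVideo = Path.Combine(Path.GetTempPath(), "clip-a.mp4"); // made-up file name
var thumbnailCommand = BuildThumbnailCommand(sampleVideo);
Console.WriteLine($"{thumbnailCommand.FileName} {string.Join(" ", thumbnailCommand.ArgumentList)}");
```

Passing the result to Process.Start would run the conversion. Building the command separately makes it easy to inspect before anything is executed.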

Once all new videos (those without a graphic) had been processed, I'd upload the graphics to S3 and delete the temporary files, then run the existing Lambda Function again to recreate the JSON Video List used by the website. That function has now been tweaked to only list files that have a thumbnail ready.

The resultant function, drawing on my S3BucketExperiments code, is fairly straightforward.

This ran great locally on Windows, but how would I get FFmpeg uploaded and configured in the Linux environment that runs Lambda?

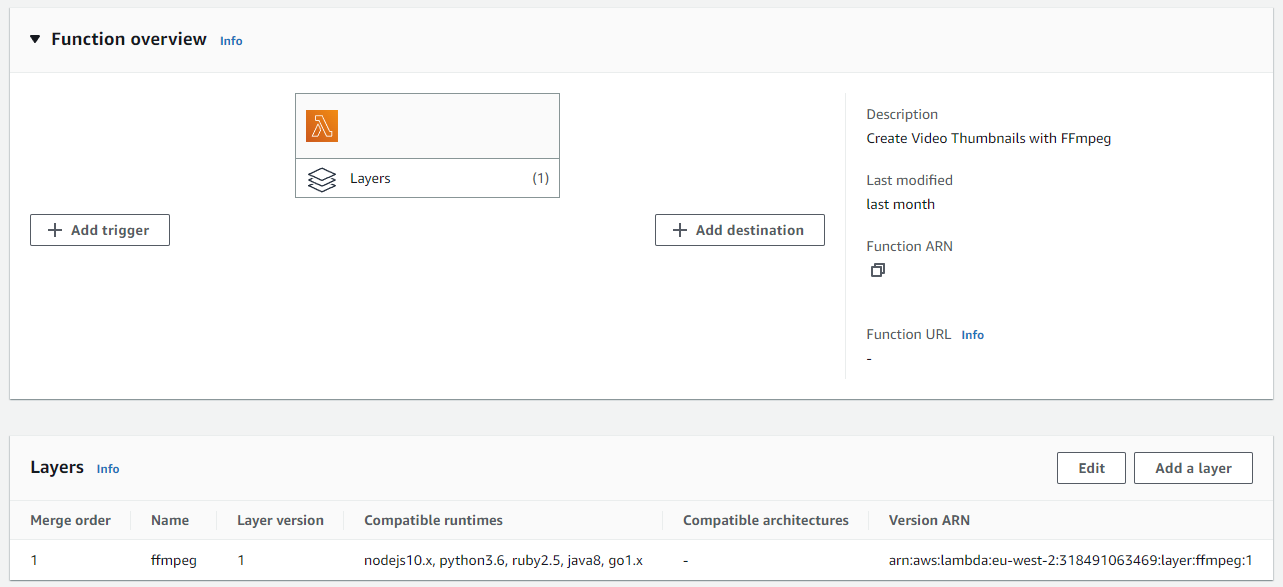

Fortunately, AWS have a simple solution for this, using Lambda layers.

There are several layers already available for FFmpeg, for example ffmpeg-lambda-layer.

All I had to do was to create a layer deployment to my environment, then in my Lambda function, add this layer.

With a slight adjustment to my function, to use the provided temporary path on Linux, /tmp, my function then worked on AWS Lambda!

The generated thumbnail files are now in use on the website.

I altered my automation flow to run this function after new files were uploaded, then on completion run the function to create the file lists for the website.

Website alterations

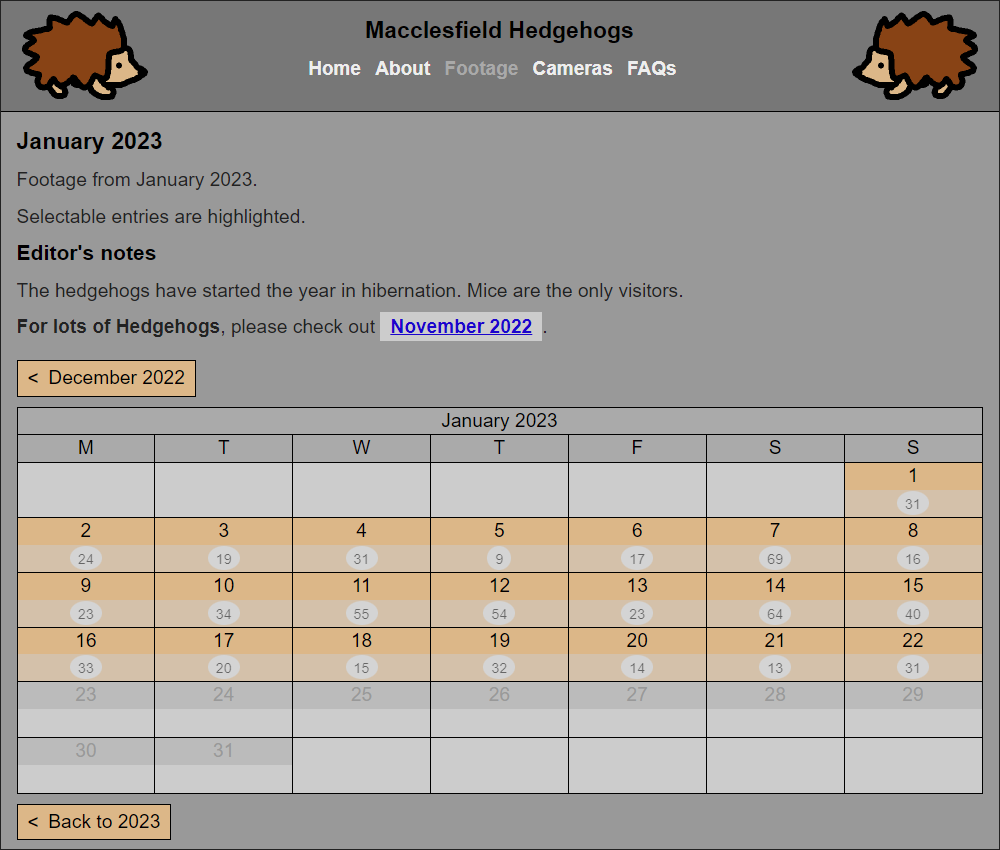

Various alterations have happened on the Macclesfield Hedgehogs website since the initial launch, including the addition of the aforementioned thumbnail images.

There have been some cosmetic improvements, and some changes to the mobile experience.

I have also added an FAQs page, which I hope to expand on.

Given the lack of hedgehogs in December, and through January, I decided to add some Editor's notes to each month page, to provide some background to what footage there is, or direct users to look on different pages. I have added similar notes to the home page too.

Aside from the video files and index, everything is still running upon GitHub Pages, and the repository is open source.

Raspberry Pi

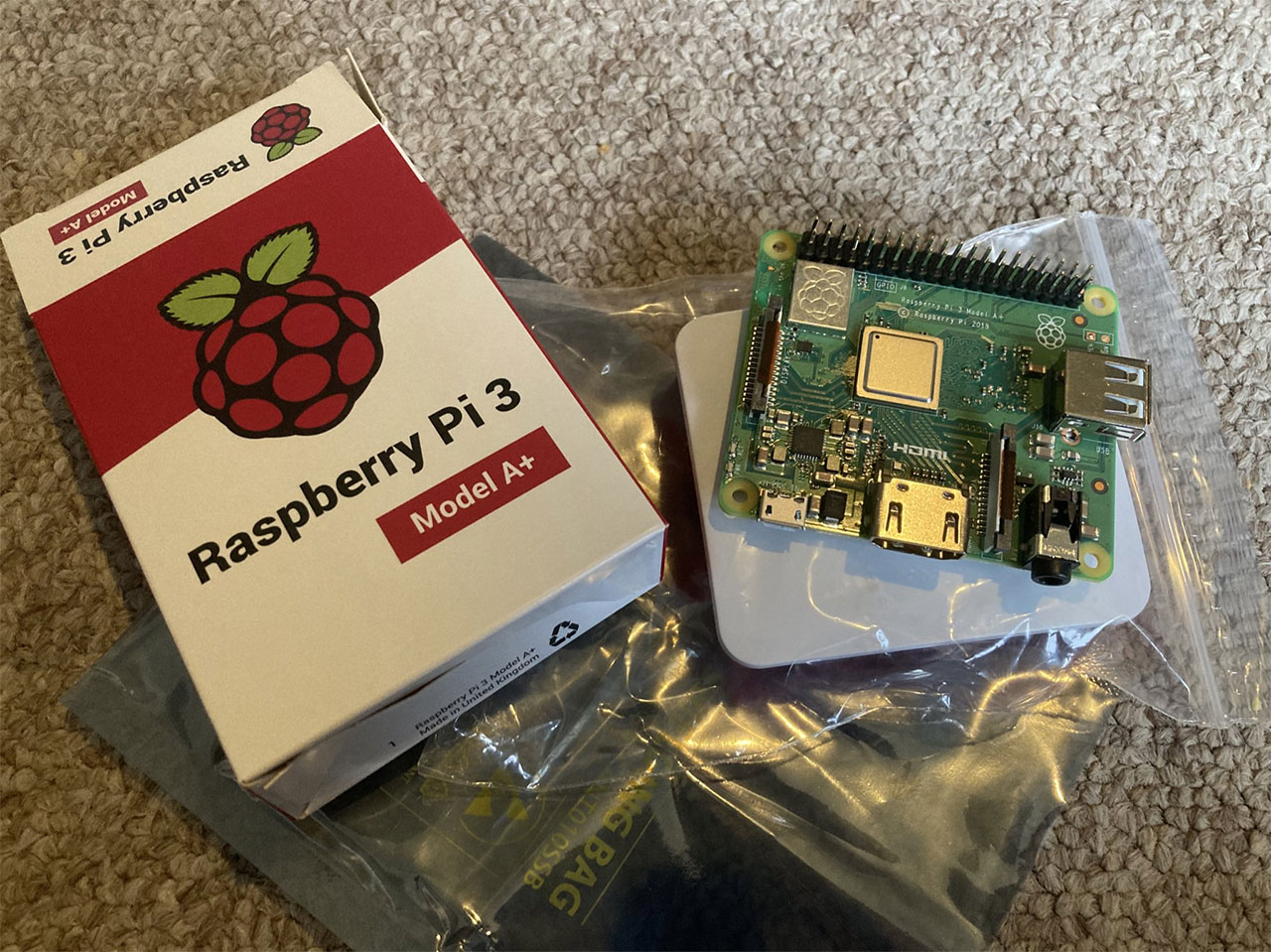

It was always my aspiration to get the project code running on a Raspberry Pi computer, but time constraints and the global supply shortage stopped this from happening.

I needed a Raspberry Pi that could run .NET 6 or 7, on a 64-bit operating system, so that was a Raspberry Pi 3, 4 or Zero 2 W.

Just after Christmas, the supply issues eased, and I was able to order a Raspberry Pi 3 - Model A+, and case, and the journey began!

Linux

I am running the Raspberry Pi OS Lite operating system, 64-bit. This is an official Linux distribution, based on Debian, that doesn't have the desktop environment, which I do not need.

I did some reading and experimenting with ways to get the .NET Runtime onto a Pi, including following the official documentation, and looking at some automatic installation scripts from Pete Gallagher.

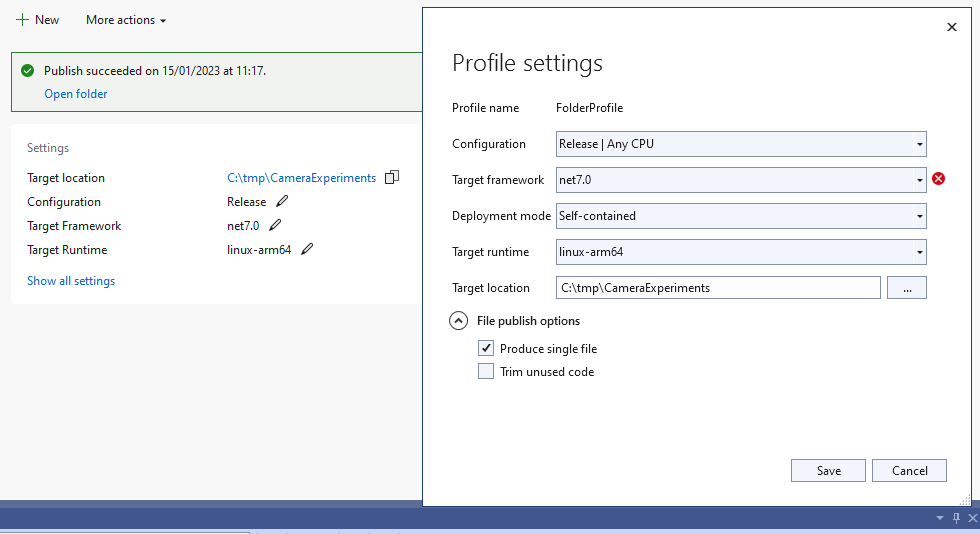

There is also the option of deploying self-contained apps, which bundle the .NET runtime with the application when publishing for linux-arm64. These can optionally be trimmed to the parts of the framework actually used, and published as a single executable.

I am now using this option, for convenience, when deploying release builds.

Once published, I can easily deploy a build using scp.

scp -r C:\tmp\CameraExperiments administrator@raspberrypi:/home/administrator/programs/

The S3BucketExperiments code needed minimal adjustments for Linux, just to cope with Linux file paths and formats.

However, the CameraExperiments code would need extensive work.

On Windows, the Wi-Fi functionality used SimpleWifi, and the Bluetooth functionality used InTheHand.BluetoothLE.

Both of these packages are incompatible with Linux.

I spent a long time experimenting with different solutions, and what I have now is functionality to call out to Linux CLI commands, and interact with them, to achieve the connections I need.

It is my understanding that this sort of thing is quite common in hobbyist IoT setups.
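The general pattern of calling out to a CLI command from C# looks something like the sketch below. It is not the code from the repository; the helper name is hypothetical, and uname is used only as a harmless stand-in for the real Wi-Fi and Bluetooth commands.

```csharp
using System;
using System.Diagnostics;

// Hypothetical helper: run a command-line tool, wait for it to finish,
// and return its exit code along with whatever it wrote to stdout.
static (int ExitCode, string Output) RunCommand(string fileName, params string[] args)
{
    var startInfo = new ProcessStartInfo(fileName)
    {
        UseShellExecute = false,
        RedirectStandardOutput = true,
    };
    foreach (var arg in args)
        startInfo.ArgumentList.Add(arg);

    using var process = Process.Start(startInfo)
        ?? throw new InvalidOperationException($"Could not start {fileName}");

    // Read stdout before waiting, so a chatty tool cannot fill the pipe and block.
    var output = process.StandardOutput.ReadToEnd();
    process.WaitForExit();
    return (process.ExitCode, output.Trim());
}

// 'uname -m' prints the CPU architecture on Linux.
var (exitCode, commandOutput) = RunCommand("uname", "-m");
Console.WriteLine($"exit {exitCode}: {commandOutput}");
```

Returning the exit code alongside the output lets the calling code decide whether to retry, which matters for connections that do not always succeed first time.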

For example, the modified C# code for Bluetooth on Linux calls out to a script file, which is also in the repository.

This code has gone through several iterations, and will likely continue to evolve.

One day I'd like to return to a true cross-platform solution, everything in C#.

Automation / Scripting

I now have the camera connection functionality, then the upload functionality, running automatically on boot up of the Pi, if booted before a certain time of day.

This is achieved by an alteration to the /etc/rc.local file, which also makes sure the date and time are correct and waits for a short while before executing to make sure Networking and Bluetooth are ready.

If the time condition is met, this then runs a further script to kick off the processes, and then shuts the Pi down again.
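The real check lives in the boot script, but the time condition itself is simple enough to express in a few lines of C#. This is only an illustration of the rule; the function name and the 09:00 cutoff are assumptions, not the values used on the Pi.

```csharp
using System;

// Hypothetical rule: only start the camera and upload run when the Pi
// booted before a cutoff time of day.
static bool ShouldRunOnBoot(DateTime bootTime, TimeOnly cutoff) =>
    TimeOnly.FromDateTime(bootTime) < cutoff;

var morningCutoff = new TimeOnly(9, 0); // made-up cutoff
Console.WriteLine(ShouldRunOnBoot(new DateTime(2023, 1, 23, 6, 30, 0), morningCutoff)); // True
Console.WriteLine(ShouldRunOnBoot(new DateTime(2023, 1, 23, 14, 0, 0), morningCutoff)); // False
```

A rule like this means a reboot later in the day, for maintenance say, does not trigger another camera run.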

I hope to have the Pi boot automatically on a timer at some point, and it may go outdoors as part of the forthcoming solar setup.

For now, the Pi sits next to my PC, for further monitoring and tweaks, and happily runs each morning.

Example output (sequence shortened).

System Alerts

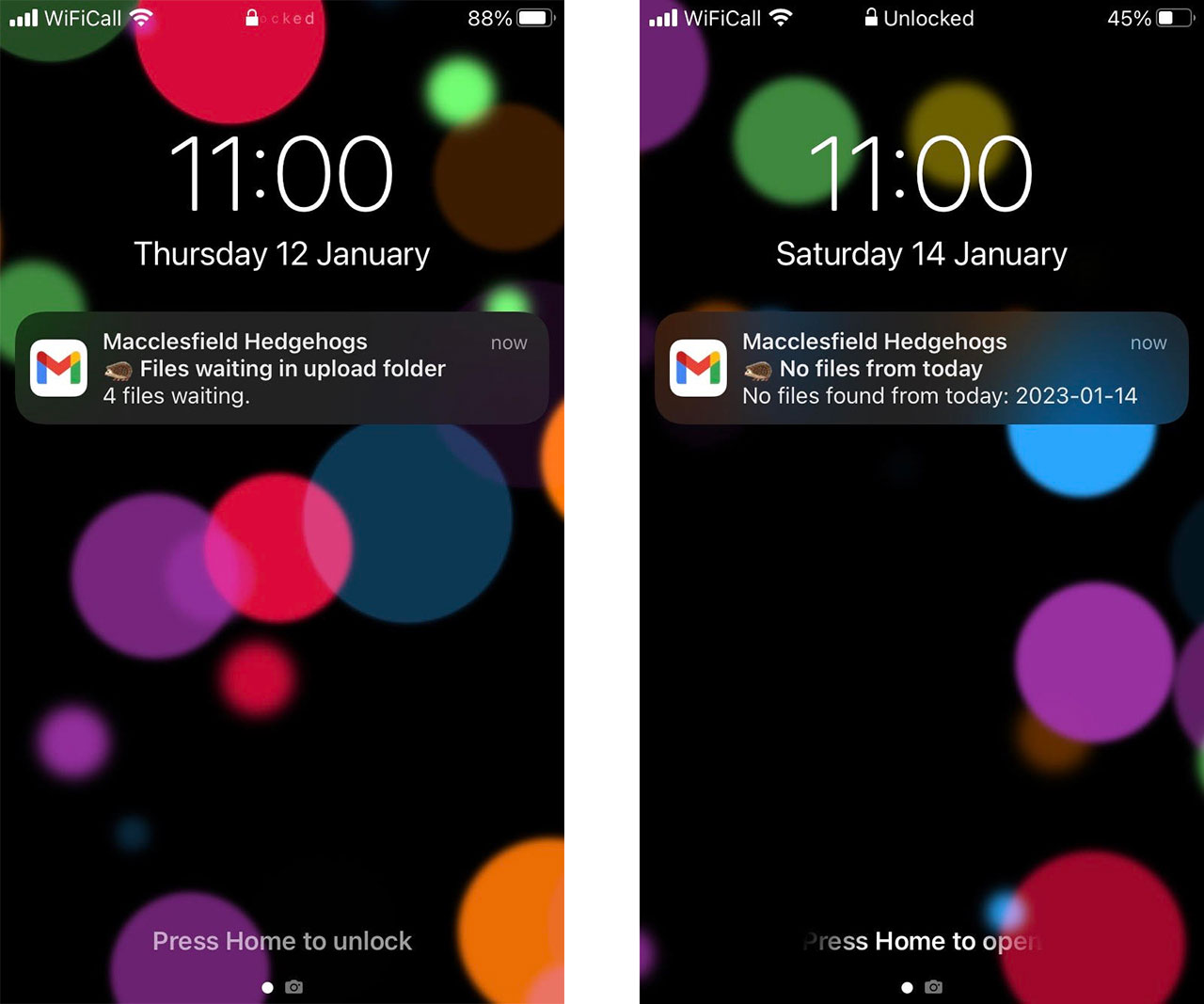

The system now alerts me if there has been no new footage, or if there are files stuck in the upload folder, by a set time each day (currently set at 11am).

This is done by yet another AWS Lambda Function, which examines the S3 Bucket for the latest footage, and sends me an email when necessary.
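The decision behind those alerts might be sketched as follows. This is not the Lambda Function itself: the function name, its inputs and the alert wording are all assumptions, and looking up the latest footage and sending the email are left out.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical check: decide which alerts to send at the daily check time.
// 'latestFootage' is the newest video timestamp found (null if there is none),
// 'stuckFiles' is the number of files still waiting in the upload folder.
static List<string> AlertsFor(DateTime checkTime, DateTime? latestFootage, int stuckFiles)
{
    var alerts = new List<string>();

    // No footage at all, or nothing dated today, counts as "no new footage".
    if (latestFootage is null || latestFootage.Value.Date < checkTime.Date)
        alerts.Add("No new footage today");

    if (stuckFiles > 0)
        alerts.Add($"{stuckFiles} file(s) stuck in the upload folder");

    return alerts;
}

var dailyCheck = new DateTime(2023, 1, 23, 11, 0, 0); // the 11am check
foreach (var alert in AlertsFor(dailyCheck, new DateTime(2023, 1, 22, 5, 10, 0), 2))
    Console.WriteLine(alert);
```

An empty list means there is nothing to report, so no email needs to be sent that day.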

I am finding this to be a useful reminder to take action when needed, as the email arrives on my iPhone.

I will likely tweak these and add alerts for other system eventualities in the coming months.

Next Steps

The system and the setup are constantly evolving.

I am making tweaks and changes to the code on a weekly basis, often to add functionality, but also to improve efficiency and prevent errors.

One of the cameras, the Xega RBX-S50, still needs setting up, and I have a setup planned in the garden to run several cameras, and possibly the Raspberry Pi, from a battery connected to a small solar panel.

I am still planning this, and it will likely happen once the winter weather passes.

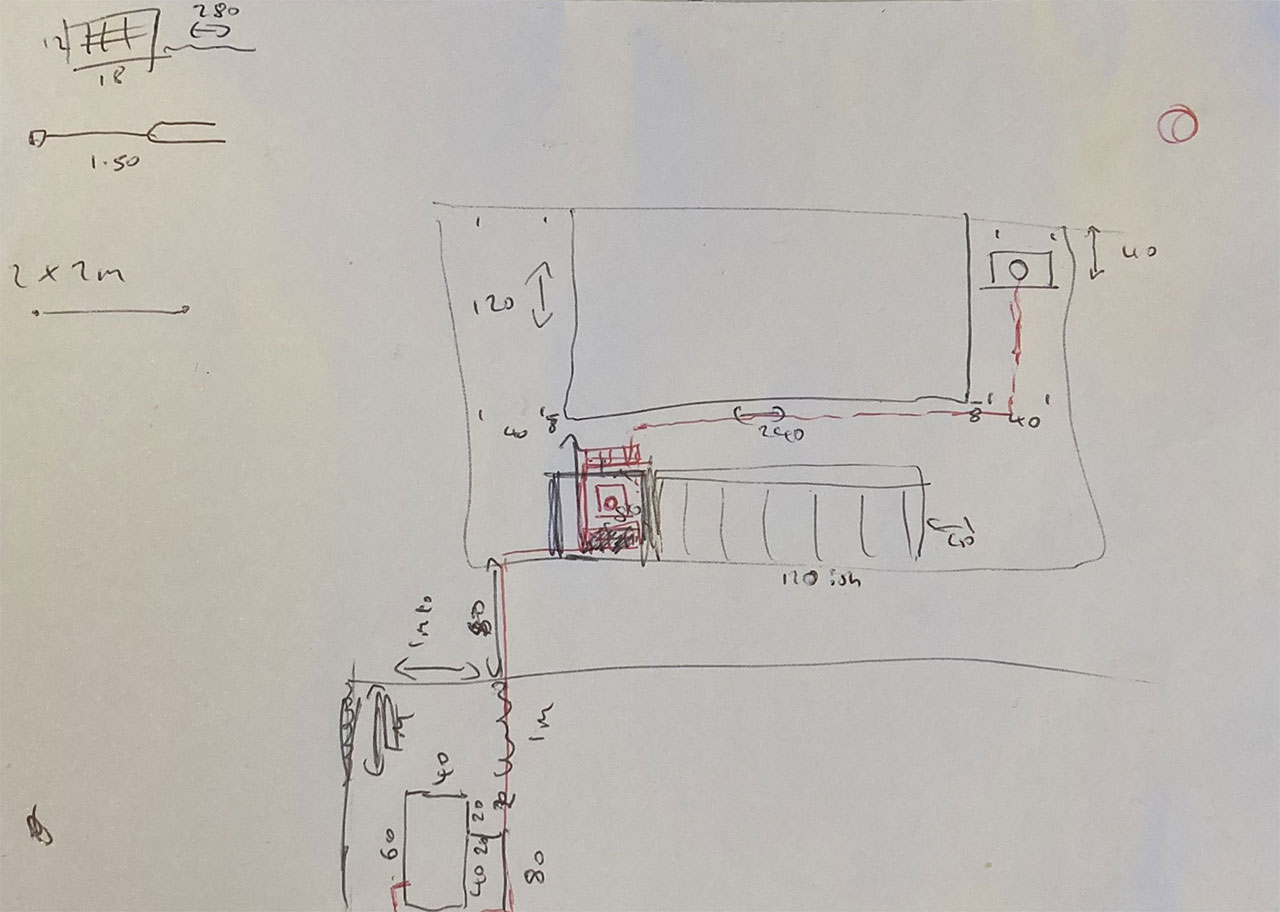

For now, there are a series of highly technical diagrams of the setup, such as this one 🙂

I also want to bring further cameras into the automation realm.

For the cameras that I cannot find a programmatic interaction for, I think I have a solution...

SD Cards with built-in Wireless LAN are available, including the Toshiba FlashAir range.

These are only available in full size SD, not Micro SD, but I can provide compatibility back to Micro SD via a ribbon cable adapter.

I am hoping to provide new functionality in my CameraExperiments repo, to provide access to the applicable cameras in a generic way.

I now have a set of these cards, and experiments with them are ongoing, likely presenting the next technical chapter in this journey!

Further ahead, I may incorporate Machine Learning into the process to work out which video files actually contain hedgehog (or mouse) footage, and which can be discarded, reducing the need for human editing of each day's uploads.

23rd Mar 2023 - Follow up article added: Hedgehog Hardware and Detection
